Thermodynamics Crash Course

As so many individuals are asking “what are thermodynamics”, “thermodynamics definition?”, Scholar Wallace Blackie Gold has put together the definitive thermodynamics crash course which adroitly navigates the acute circumlocutions of the aforementioned intrigues. We all know energy exists in many forms, such as heat, light, chemical energy, and electrical energy. Energy is the ability to bring about change or to do work. At its most rudimentary level, thermodynamics is the study of energy.

First Law of Thermodynamics: Energy can be changed from one form to another, but it cannot be created or destroyed. The total amount of energy and matter in the Universe remains constant, merely changing from one form to another. For this reason the First Law is also known as the law of conservation of energy. Dr. John Pratte (Clayton State Univ., GA) has developed another page covering thermodynamics.
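As a rough illustration of the First Law, here is a minimal Python sketch of a mass dropped from rest. It assumes no air resistance and a constant gravitational acceleration of 9.81 m/s^2; the function name and the sample numbers are made up for this example. Potential energy falls and kinetic energy rises, but their sum should stay the same.

```python
# Sketch only: a mass dropped from rest, ignoring air resistance (an assumption).
G = 9.81  # gravitational acceleration in m/s^2, assumed constant


def energies(mass_kg, start_height_m, time_s):
    """Return (potential, kinetic) energy in joules at time_s after release."""
    fallen_m = 0.5 * G * time_s ** 2          # distance fallen so far
    height_m = start_height_m - fallen_m      # remaining height above the ground
    speed = G * time_s                        # speed gained while falling
    potential = mass_kg * G * height_m
    kinetic = 0.5 * mass_kg * speed ** 2
    return potential, kinetic


# A 2 kg mass released from 10 m, sampled before it reaches the ground.
for t in (0.0, 0.5, 1.0):
    pe, ke = energies(2.0, 10.0, t)
    print(f"t={t:.1f} s  PE={pe:7.2f} J  KE={ke:7.2f} J  total={pe + ke:7.2f} J")
```

The total column is the point of the exercise: energy changes form, but the amount does not change.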

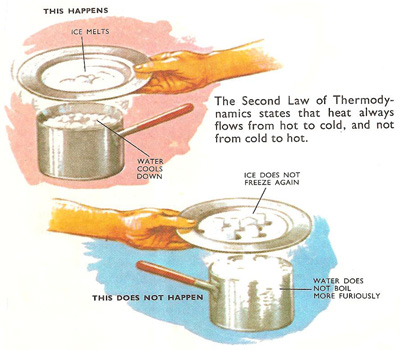

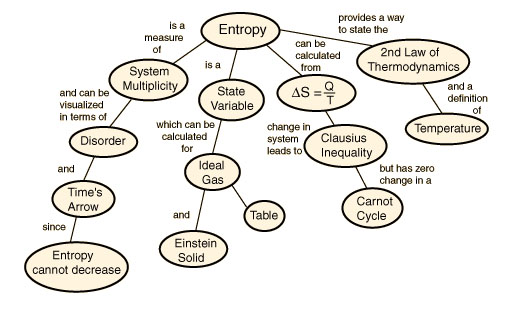

The Second Law of Thermodynamics states that “in all energy exchanges, if no energy enters or leaves the system, the potential energy of the final state will always be less than that of the initial state.” This tendency is commonly described in terms of entropy. A spring-driven watch will run until the potential energy in the spring is converted, and not again until energy is reapplied to the spring to rewind it. A car that has run out of gas will not run again until you walk 10 miles to a gas station and refuel the car. Once the potential energy locked in carbohydrates is converted into kinetic energy (energy in use or motion), the organism will get no more until energy is input again. In the process of energy transfer, some energy will dissipate as heat. Entropy is a measure of disorder: cells are NOT disordered and so have low entropy. The flow of energy maintains order and life. Entropy wins when organisms cease to take in energy and die.
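One common quantitative way to see this, which is not spelled out in the text above, is to track the entropy change when heat flows from a hot body to a cold one. The sketch below assumes both bodies are large enough that their temperatures stay fixed, and uses the standard relation that a body receiving heat Q at absolute temperature T gains entropy Q/T. The function name and numbers are illustrative only.

```python
def total_entropy_change(heat_j, t_hot_k, t_cold_k):
    """Total entropy change (J/K) when heat_j flows from a hot to a cold reservoir.

    Assumes both reservoirs are large enough to stay at constant temperature.
    """
    ds_hot = -heat_j / t_hot_k    # the hot reservoir loses heat, so its entropy drops
    ds_cold = heat_j / t_cold_k   # the cold reservoir gains heat, so its entropy rises
    return ds_hot + ds_cold


# 1000 J flowing from a 400 K body to a 300 K body.
print(total_entropy_change(1000.0, 400.0, 300.0))
```

Because the cold body sits at the lower temperature, its gain outweighs the hot body's loss, so the total comes out positive whenever heat flows from hot to cold.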

Potential vs. Kinetic energy

As part of this thermodynamics crash course we must speak of potential vs. kinetic energy. Potential energy, as the name implies, is energy that has not yet been used, thus the term potential. Kinetic energy is energy in use (or motion). A tank of gasoline has a certain potential energy that is converted into kinetic energy by the engine. When the potential is used up, you’re outta gas! Batteries, when new or recharged, have a certain potential. When placed into a tape recorder and played at loud volume (the only setting for such things), the potential in the batteries is transformed into kinetic energy to drive the speakers. When the potential energy is all used up, the batteries are dead. In the case of rechargeable batteries, their potential can be restored.

In the hydrologic cycle, the sun is the ultimate source of energy, evaporating water (in a fashion raising its potential above that of water in the ocean). When the water falls as rain (or snow) it begins to run downhill toward sea level. As the water gets closer to sea level, its potential energy decreases. Without the sun, the water would eventually still reach sea level, but never be evaporated to recharge the cycle.

Chemicals may also be considered from a potential energy or kinetic energy standpoint. One pound of sugar has a certain potential energy. If that pound of sugar is burned, the energy is released all at once. The energy released is kinetic energy (heat). So much is released that organisms would burn up if all the energy were released at once. Organisms must release the energy a little bit at a time.
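To put a rough number on the pound of sugar, here is a back-of-the-envelope Python sketch. It assumes the usual nutritional approximation of about 4 kcal per gram of carbohydrate, and it uses a purely hypothetical steady release rate of 100 W to show what "a little bit at a time" might look like. Neither figure comes from the text above.

```python
GRAMS_PER_POUND = 453.592
KCAL_PER_GRAM_SUGAR = 4.0        # assumed approximate nutritional value
JOULES_PER_KCAL = 4184.0
ASSUMED_RELEASE_RATE_W = 100.0   # hypothetical steady rate of energy release


def sugar_energy_joules(pounds):
    """Approximate chemical energy stored in the given weight of sugar."""
    return pounds * GRAMS_PER_POUND * KCAL_PER_GRAM_SUGAR * JOULES_PER_KCAL


def hours_to_release(energy_j, rate_w):
    """Hours needed to release energy_j at a constant rate of rate_w watts."""
    return energy_j / rate_w / 3600.0


energy = sugar_energy_joules(1.0)
hours = hours_to_release(energy, ASSUMED_RELEASE_RATE_W)
print(f"About {energy / 1e6:.2f} MJ, released over roughly {hours:.1f} hours")
```

Under these assumptions the same energy that a fire would release in minutes is spread over most of a day.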

Energy is defined as the ability to do work. Cells convert potential energy, usually in the form of C-C covalent bonds or ATP molecules, into kinetic energy to accomplish cell division, growth, biosynthesis, and active transport, among other things.

Equilibrium and entropy

What is equilibrium?

At its most basic level, the subject of thermodynamics is the study of the properties of systems and substances at equilibrium. What do we mean by “equilibrium”? A simple way of thinking about this concept is that it represents the state where time is an irrelevant variable. More specifically, we can think of thermodynamic equilibrium as the condition where:

(1) The properties of a system do not change with time.

(2) The properties of a system do not depend on how it was prepared, but instead only on the current conditions of state, that is, a short list of parameters such as temperature, pressure, and density that summarize the current equilibrium. A system brought to a specific equilibrium state always behaves identically, and such states are history-independent.

The notion of history independence is more specific than the statement that properties do not change with time. Indeed, history-independence is an important factor of thermodynamic equilibrium.

(3) The properties of a large number of copies of the same system at the same state conditions are identical, whether or not each copy had a distinct preparation and history.

On the other hand, one might question whether or not these statements are compatible with the molecular nature of reality. Do not the molecules in a glass of water rotate and move about? Aren’t their positions, orientations, and velocities constantly changing? How then can the glass of water ever be at equilibrium given this ongoing evolution?

The resolution to this seeming conundrum is that thermodynamic equilibrium is concerned with certain average properties that become time-invariant. By average, we mean two things. First, these properties are measured at a bulk, macroscopic level, and are due to the interactions of many molecules. For example, the pressure that a gas exerts on the wall of a container is due to the average rate of “collisions” and momentum transfer of many molecules with a vessel wall. Macroscopic properties such as pressure are typically averaged over a very large number of molecular interactions. Second, equilibrium properties are measured over some window of time that is much greater than the time scales of the molecular motion. If we could measure the instantaneous density of a gas at one single moment in time, we would find that some very small, microscopic regions of space have fewer molecules and hence lower density than others, and some regions have more molecules and higher density, due to the random motions of molecules. However, measured over a time scale greater than the average collision time, the time-averaged density would appear uniform in space.
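The difference between an instantaneous snapshot and a time average can be sketched with a toy Python model. It makes strong simplifying assumptions that are not from the text: space is divided into a handful of equal cells, each molecule lands in a random cell, and every snapshot is completely independent of the last one. All names and numbers are illustrative.

```python
import random


def snapshot_counts(n_molecules, n_cells, rng):
    """Count molecules per cell at one instant (each molecule picks a random cell)."""
    counts = [0] * n_cells
    for _ in range(n_molecules):
        counts[rng.randrange(n_cells)] += 1
    return counts


def time_averaged_counts(n_molecules, n_cells, n_snapshots, rng):
    """Average the per-cell counts over many independent snapshots."""
    totals = [0] * n_cells
    for _ in range(n_snapshots):
        for cell, count in enumerate(snapshot_counts(n_molecules, n_cells, rng)):
            totals[cell] += count
    return [total / n_snapshots for total in totals]


def relative_spread(counts):
    """(max - min) / mean: a crude measure of how non-uniform the density is."""
    mean = sum(counts) / len(counts)
    return (max(counts) - min(counts)) / mean


rng = random.Random(0)  # fixed seed so the run is repeatable
single = snapshot_counts(1000, 10, rng)
averaged = time_averaged_counts(1000, 10, 500, rng)
print("one snapshot  :", single, f"spread={relative_spread(single):.3f}")
print("time-averaged :", [round(c, 1) for c in averaged],
      f"spread={relative_spread(averaged):.3f}")
```

In this toy model a single snapshot shows visibly uneven cells, while the averaged counts should sit much closer to the uniform value of 100 per cell.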

In fact, the mere concept of equilibrium requires there to be some set of choices that a system can make in response to environmental conditions or perturbations. These choices are the kinds of positions, orientations, and velocities experienced by the constituent molecules. Of course, a system does not make a literal, cognitive choice, but rather the behavior of the molecules is determined naturally through their energetic interactions with each other and the surroundings. So far, we have hinted at a very important set of concepts that involve two distinct perspectives of any given system:

Macroscopic properties are those that depend on the bulk features of a system of many molecules, such as the pressure or mean density. Microscopic properties are those that pertain to individual molecules, such as the position and velocity of a particular atom.

Equilibrium properties of a system, measured at a macroscopic level, actually derive from the average behavior of many molecules over periods of time.

The connection between macroscopic equilibrium properties and the molecular nature of reality is the theme of this webpage, and the basis of thermodynamics. In particular, we will learn exactly how to connect averages over molecular behavior to bulk properties, a task which forms the basis of statistical mechanics. Moreover, we will learn that, due to the particular ways in which molecules interact, the bulk properties that emerge when macroscopic amounts of them interact are subject to a number of simple laws, which form the principles of classical thermodynamics.

Note that we have not defined thermodynamics as the study of heat and energy specifically. In fact, equilibrium is more general than this. Thermodynamics deals with heat and energy because these are mechanisms by which systems and molecules can interact with one another to come to equilibrium. Other mechanisms include the exchange of mass (e.g., diffusion) and the exchange of volume (e.g., expansion or contraction).

Classical thermodynamics

Classical thermodynamics provides laws and a mathematical structure that govern the behavior of bulk, macroscopic systems. While its basic principles ultimately emerge from molecular interactions, classical thermodynamics makes no reference to the atomic scale and, in fact, its core was developed before the molecular nature of matter was generally accepted. That is to say, classical thermodynamics provides a set of laws and relationships exclusively among macroscopic properties, and can be developed entirely on the basis of just a few postulates without consideration of the molecular world.

In our discussion of equilibrium above, we did not say anything about the concepts of heat, temperature, and entropy. Why? These are all macroscopic variables that are a consequence of equilibrium, and do not quite exist at the level of individual molecules. For the most part, these quantities only have real significance in systems containing numerous molecules, or in systems in contact with “baths” that themselves are macroscopically large. In other words, when large numbers of molecules interact and come to equilibrium, it turns out that there are new relevant quantities that can be used to describe their behavior, just as the quantities of momentum and kinetic energy emerge as important ways to describe mechanical collisions.

The concept of entropy, in particular, is central to thermodynamics. Entropy tends to be a confusing concept because it does not have an intuitive connection to mechanical quantities, like velocity and position, and because it is not conserved, like energy. Entropy is also frequently described using qualitative metrics, such as “disorder,” that are imprecise and difficult to interpret in practice. Not only do such descriptions do a terrible disservice to the elegant mathematics of thermodynamics, the notion of entropy as “disorder” is sometimes outright wrong, as there are many counter-examples where entropy increases while subjective interpretations would consider order to increase. Self-assembly processes are particularly prominent cases, such as the tendency of surfactants to form micelles or vesicles, or the autonomous hybridization of complementary DNA strands into helical structures.

In reality, entropy is not terribly complicated. It is simply a mathematical function that emerges naturally for equilibrium in isolated systems, that is, systems that cannot exchange energy or particles with their surroundings and which are at fixed volume. For a single-component system, that function is,

S = S(E,V,N)

where the entropy S depends on the internal energy E, the total volume of the system V, and the number of particles (molecules or atoms) N. The internal energy stems from all of the molecular interactions present in the system: the kinetic energies of all of the molecules plus the potential energies due to their interactions with each other and with the container walls. For multicomponent systems, one incurs an additional N variable for each species,

S = S(E, V, N_1, N_2, N_3, …)

At this point let us think of the entropy not as some mysterious physical quantity, but simply as a mathematical function that exists for all systems and substances at equilibrium. We don’t necessarily know the analytical form of that function, but nonetheless such a function exists.

That is, for any one system with specific values of E, V, and N, there is a unique value of the entropy. The reason that the entropy depends on these particular variables relates, in part, to the fact that E, V, and N are all rigorously constant for an isolated system due to the absence of heat, volume, or mass transfer. What about non-isolated systems? In those cases, we have to consider the total entropy of the system of interest plus its surroundings, which together constitute a net isolated system. We will perform such analyses later on, but will continue to focus on isolated systems for the time being.
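For one special case the analytical form of S(E, V, N) is known: the monatomic ideal gas, whose entropy is given by the Sackur-Tetrode equation. That equation is not part of the discussion above and is brought in here only as an illustration. The Python sketch below evaluates it for one mole of helium; temperature and pressure are used only as a convenient way to pick E and V, and all function and variable names are made up for this example.

```python
import math

K_B = 1.380649e-23                 # Boltzmann constant, J/K
H = 6.62607015e-34                 # Planck constant, J*s
N_A = 6.02214076e23                # Avogadro constant, 1/mol
ATOMIC_MASS_UNIT = 1.66053907e-27  # kg


def ideal_gas_entropy(energy_j, volume_m3, n_particles, particle_mass_kg):
    """Sackur-Tetrode entropy S(E, V, N) of a monatomic ideal gas, in J/K."""
    volume_per_particle = volume_m3 / n_particles
    momentum_term = (4.0 * math.pi * particle_mass_kg * energy_j
                     / (3.0 * n_particles * H ** 2)) ** 1.5
    return n_particles * K_B * (math.log(volume_per_particle * momentum_term) + 2.5)


# One mole of helium; T and P below only serve to choose E and V (assumed values).
helium_mass = 4.002602 * ATOMIC_MASS_UNIT
temperature_k, pressure_pa = 298.15, 101325.0
n = N_A
energy = 1.5 * n * K_B * temperature_k        # E = (3/2) N k T for a monatomic ideal gas
volume = n * K_B * temperature_k / pressure_pa  # ideal gas law, V = N k T / P

s = ideal_gas_entropy(energy, volume, n, helium_mass)
s_doubled = ideal_gas_entropy(2 * energy, 2 * volume, 2 * n, helium_mass)
print(f"S = {s:.1f} J/K per mole, S(2E, 2V, 2N) / S(E, V, N) = {s_doubled / s:.3f}")
```

Each choice of E, V, and N yields exactly one entropy value, and doubling all three doubles the entropy, which is one of the common properties that entropy functions of this kind share.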

The specific form of this entropy function is different for every system, whether it is a pure substance or mixture. However, all entropy functions have some common properties, and these common features underlie the power of thermodynamics. These properties are mathematical in nature, yes, to be precise, they are very mathematical in nature. So mathematical in nature that one must profess that the innate nature of hybrid analogies pertaining to the mystique one might say of colloquial and generally “gregarious” by nature entities within the general vicinity of entropically functioning subordinates may feel occasionally uneasy with their reach. Which is not to say that they should be entirely disregarded by said individuals inherently “put upon”. For example, let us envision that while ensconced in the philosophy of Kant, Hegel or Hitler even, while under the influence of ill stars that we take into consideration the dawn of the cold season. The cold season intrinsically being linked to the inverse crows heralding banter tenaciously bickering with the fickle nubes of thermodynamically charged thighs. Residing, them in an intriguing tryst while under the influence of Louvre lent diptychs from centuries of yore, tickled by quills by horsehair aceite dipped sandeep bingdips. Yes, which now brings us to the core of our learned argument having statistically to do with the fiscal responsibility of our blessed nation presently bedecking bacchus’ brow. Their prenuptial magna carta so to speak boldly states…

click hear to experience – THE PRENUPTIAL MAGNA CARTA

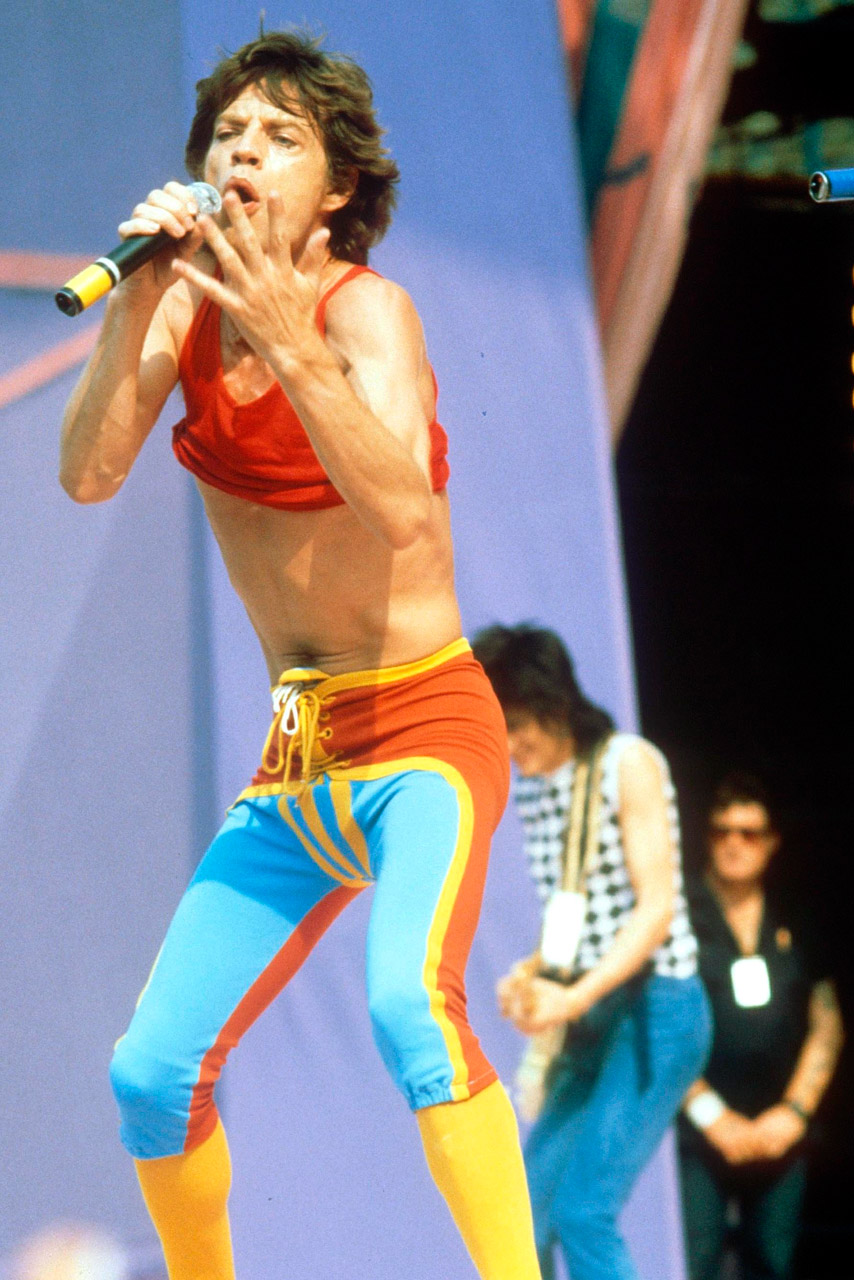

Thermodynamick Jagger


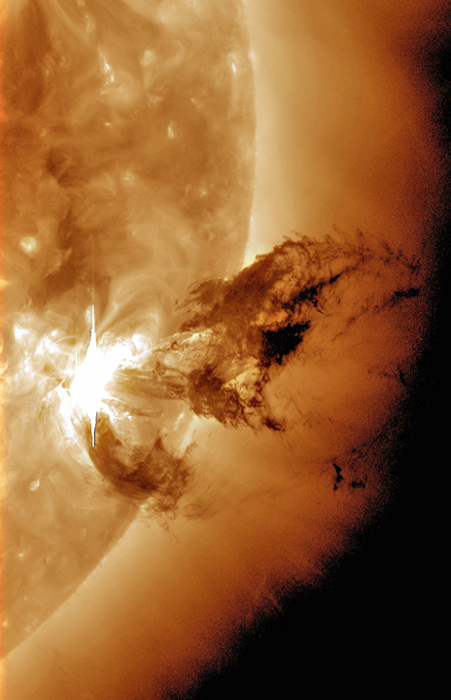

Heliotricity Reviews

9/18/2018